Hey there,

If you’ve been waiting for “real” AI agents that feel more like a coordinated team than a single chatbot, Anthropic just gave us an interesting glimpse of that future with Agent Teams. Let’s unpack what this actually means for your work.

Anthropic’s new Agent Teams feature lets you run multiple Claude agents in parallel, each owning a different part of a workflow, while coordinating with each other to finish an end‑to‑end task.

Instead of one long, sequential prompt chain, you structure a team: for example, a research agent, a coding agent, a tester, a reviewer, and a deployer that pass work and feedback between them.

Anthropic is positioning this inside the Opus 4.6 release, currently in research preview for API users and subscribers, so it’s aimed squarely at builders, not casual chat users (yet).

At a high level, you define roles and responsibilities, then wire them into a workflow so different agents can run simultaneously or in structured stages.

Anthropic describes this as splitting a complex job into sub‑tasks, where each agent specializes: one might analyze requirements, another writes code, another runs tests or checks style, and another summarizes or packages the result.

Internally, the agents share a common context and artifacts (like a codebase or doc set), so they can iterate together without you manually copy‑pasting between steps.

The system is designed to mimic a small engineering team: individual responsibility, shared workspace, and coordination to reduce back‑and‑forth with the human in the loop.
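To make the "roles plus shared workspace" idea concrete, here is a minimal sketch in plain Python. It does not use Anthropic's API or the actual Agent Teams interface; `Workspace`, `Role`, and `run_stages` are hypothetical names invented for illustration, and each `run` function is a stand-in for a model call.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Workspace:
    """Shared artifacts every role can read from and write to (hypothetical)."""
    artifacts: Dict[str, str] = field(default_factory=dict)
    log: List[str] = field(default_factory=list)


@dataclass
class Role:
    """One agent's responsibility: which artifacts it reads and which one it writes."""
    name: str
    reads: List[str]
    writes: str
    run: Callable[[Dict[str, str]], str]  # stand-in for a real model call


def run_stages(stages: List[List[Role]], ws: Workspace) -> Workspace:
    """Run stages in order; roles inside one stage only see the previous stages' output."""
    for stage in stages:
        snapshot = dict(ws.artifacts)  # roles in the same stage are independent of each other
        for role in stage:
            inputs = {key: snapshot[key] for key in role.reads}
            ws.artifacts[role.writes] = role.run(inputs)
            ws.log.append(f"{role.name} wrote {role.writes}")
    return ws


# Stub roles mirroring the example in the text: analyze, code, test, check style, summarize.
analyst = Role("analyst", ["ticket"], "requirements", lambda a: "requirements for " + a["ticket"])
coder = Role("coder", ["requirements"], "code", lambda a: "code for " + a["requirements"])
tester = Role("tester", ["code"], "test_report", lambda a: "tests pass on " + a["code"])
stylist = Role("style_checker", ["code"], "style_report", lambda a: "style ok on " + a["code"])
summarizer = Role(
    "summarizer",
    ["test_report", "style_report"],
    "summary",
    lambda a: a["test_report"] + "; " + a["style_report"],
)

ws = run_stages(
    [[analyst], [coder], [tester, stylist], [summarizer]],
    Workspace(artifacts={"ticket": "add login page"}),
)
print(ws.artifacts["summary"])
```

The tester and style checker sit in the same stage because neither depends on the other, which is the kind of step a real system could run simultaneously.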

To show this off, Anthropic used an agent team of Claude instances to build a working C compiler from scratch with minimal human intervention.

Different agents handled tasks like designing the architecture, writing specific modules, integrating components, and running tests on a shared code repository.

The key takeaway for developers is not “AI can build a compiler,” but “AI can manage a long‑running, multi‑file, multi‑step software project without you manually orchestrating every step.”

That’s a big jump from the usual “ask for a snippet, paste into your code editor, and debug yourself” workflow most coding assistants still rely on.

Agent Teams arrive as part of Claude Opus 4.6, Anthropic’s latest top‑tier model.

This upgrade also extends the context window to around one million tokens, making it feasible for multiple agents to work across large codebases or document sets without constantly losing context.

On the business side, Anthropic has been rolling out an enterprise agents program that aims to embed agentic workflows into everyday tools and processes: think coding, document workflows, and integrations with PowerPoint and other productivity tools.

Agent Teams sit right in the middle of that strategy: they’re the “workflow brain” that enterprises can point at specific processes, from software delivery to internal research sprints.

For devs and small businesses, Agent Teams are interesting because they move beyond single‑step automation into something closer to a virtual project team.

A small shop could, in theory, set up an agent pipeline where one agent drafts feature specs, another scaffolds code, another writes tests, another refactors for clarity, and a final agent prepares deployment notes or change logs.

Anthropic’s recent workflow guide talks about three practical patterns for agents—sequential pipelines, parallel workers, and evaluator‑optimizer loops—which map nicely onto Agent Teams.

Put differently, you’re not just “calling the API”; you’re designing a mini‑organization of agents that follow these patterns to hit latency, quality, and cost targets.
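For readers who think in code, the three patterns can be sketched with ordinary Python functions. This is an illustration under stated assumptions, not Anthropic's implementation: every "agent" below is a stub function standing in for a model call, and the helper names (`sequential`, `parallel`, `evaluator_optimizer`) are made up for this example.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Tuple

Agent = Callable[[str], str]  # stand-in type for "send text to an agent, get text back"


def sequential(agents: List[Agent], task: str) -> str:
    """Sequential pipeline: each agent works on the previous agent's output."""
    result = task
    for agent in agents:
        result = agent(result)
    return result


def parallel(agents: List[Agent], task: str) -> List[str]:
    """Parallel workers: every agent gets the same task; results keep the input order."""
    with ThreadPoolExecutor(max_workers=max(1, len(agents))) as pool:
        return list(pool.map(lambda agent: agent(task), agents))


def evaluator_optimizer(
    generate: Callable[[str, str], str],
    evaluate: Callable[[str], Tuple[bool, str]],
    task: str,
    max_rounds: int = 3,
) -> str:
    """Evaluator-optimizer loop: regenerate with feedback until accepted or out of rounds."""
    feedback, draft = "", ""
    for _ in range(max_rounds):
        draft = generate(task, feedback)
        accepted, feedback = evaluate(draft)
        if accepted:
            break
    return draft


# Stub agents (hypothetical) so the sketch runs without any API key.
def write_spec(text: str) -> str:
    return f"spec({text})"


def write_code(text: str) -> str:
    return f"code({text})"


def security_review(text: str) -> str:
    return f"security ok: {text}"


def style_review(text: str) -> str:
    return f"style ok: {text}"


def draft_memo(task: str, feedback: str) -> str:
    return f"{task} v2" if feedback else f"{task} v1"


def judge_memo(draft: str) -> Tuple[bool, str]:
    return draft.endswith("v2"), "tighten the intro"


pipeline_result = sequential([write_spec, write_code], "login")
review_results = parallel([security_review, style_review], "diff")
final_memo = evaluator_optimizer(draft_memo, judge_memo, "memo")
```

The trade-offs show up even in a toy version: the pipeline is simplest but slowest end to end, the parallel workers cut wall-clock time at the price of more simultaneous calls, and the loop buys quality with extra rounds, so `max_rounds` is effectively a cost cap.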

Here are a few concrete scenarios where Agent Teams could be useful once they’re generally accessible:

End‑to‑end feature delivery

One agent interprets tickets and requirements, another generates code and tests, another runs a review against style and security checklists, and another writes documentation or release notes.

Research → Decision pipelines

A research agent gathers sources, a synthesis agent builds a brief, an evaluator agent checks for quality and bias, and a final agent drafts an executive summary or recommendation memo.

Content production workflows

For creators, you could imagine a planning agent generating outlines, a drafting agent writing scripts or blog posts, an editor agent tightening language and checking facts, and a distribution agent generating platform‑specific versions.

In each case, your job shifts from manually prompting a single model to defining roles, guardrails, and success criteria for an entire automated team.

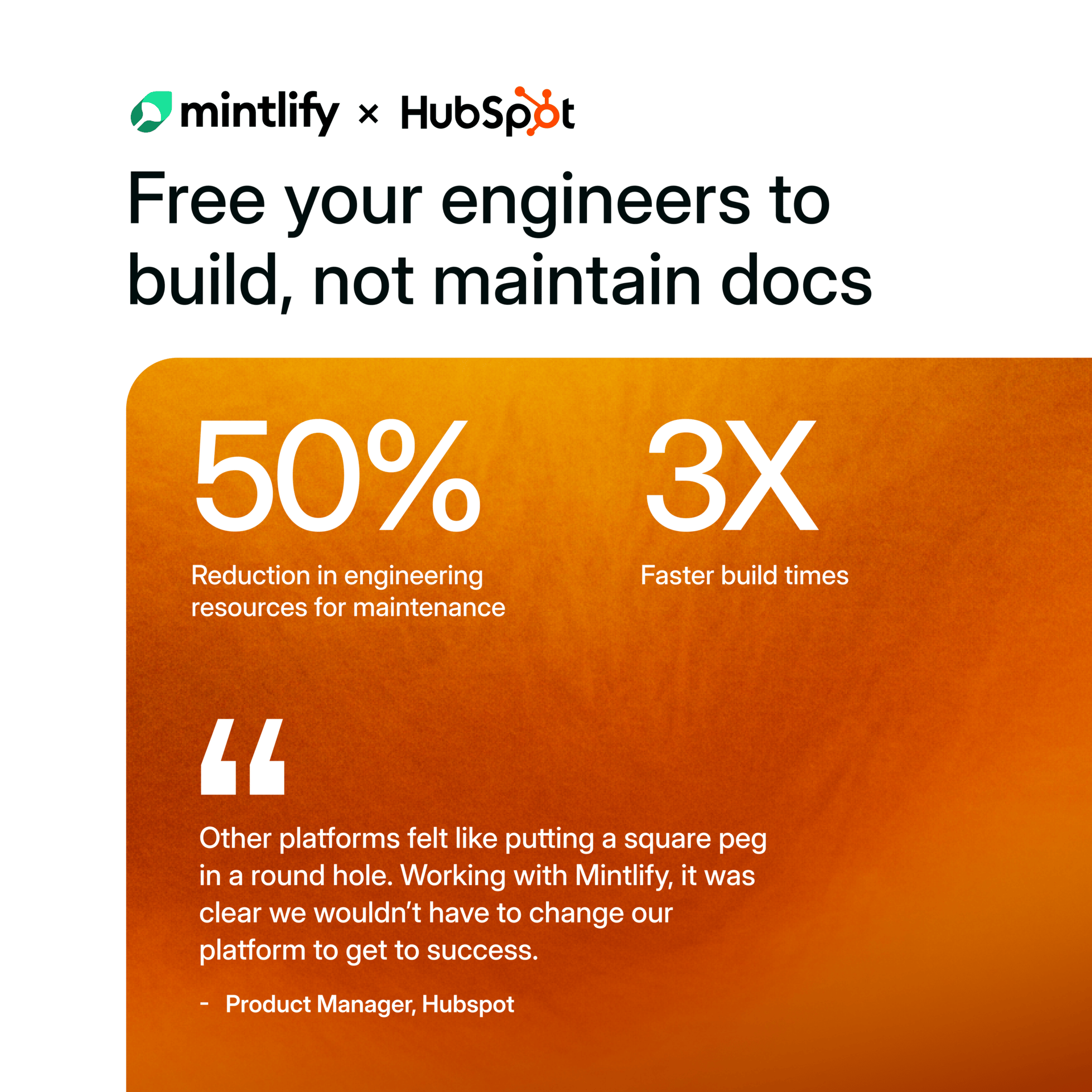

See Why HubSpot Chose Mintlify for Docs

HubSpot switched to Mintlify and saw 3x faster builds with 50% fewer eng resources. Beautiful, AI-native documentation that scales with your product — no custom infrastructure required.

Limitations and Things to Watch

Right now, Agent Teams are in research preview, so they’re aimed at early adopters comfortable with rough edges, not yet a polished “click‑and‑go” feature for everyone.

Running multiple agents in parallel also raises practical concerns: higher cost per workflow, more complex failure handling, and the need for solid evaluation and monitoring to keep things from silently going off the rails.

Anthropic’s own guidance treats robust evals, explicit handling of failure modes, and production monitoring as core design requirements when you build with agents, not nice‑to‑have add‑ons.

If you’re thinking about adopting Agent Teams, it’s worth planning for test suites, logging, and human‑in‑the‑loop review up front rather than bolting them on later.
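As a rough picture of what "logging, checks, and human review up front" might look like, here is a small sketch. It assumes each agent step is wrapped in a function and that you have some way to validate its output. The names `run_with_guardrails` and `review_queue` are hypothetical, and the agents are stubs rather than real model calls.

```python
import logging
from typing import Callable, List, Optional, Tuple

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("agent_team")


def run_with_guardrails(
    name: str,
    agent: Callable[[str], str],
    task: str,
    check: Callable[[str], bool],
    review_queue: List[Tuple[str, str]],
    max_attempts: int = 2,
) -> Optional[str]:
    """Run one agent step with logging, a bounded retry, and escalation to a human."""
    for attempt in range(1, max_attempts + 1):
        try:
            output = agent(task)
        except Exception as exc:  # a failed call is logged, never swallowed silently
            log.warning("%s attempt %d raised %r", name, attempt, exc)
            continue
        if check(output):
            log.info("%s attempt %d passed its check", name, attempt)
            return output
        log.warning("%s attempt %d failed its check", name, attempt)
    review_queue.append((name, task))  # hand the step to a person instead of guessing
    return None


# Stub agents (hypothetical): one times out once, one always returns nothing useful.
calls = {"count": 0}


def flaky_tester(task: str) -> str:
    calls["count"] += 1
    if calls["count"] == 1:
        raise TimeoutError("simulated timeout")
    return f"tests passed: {task}"


def silent_deployer(task: str) -> str:
    return ""


queue: List[Tuple[str, str]] = []
tested = run_with_guardrails(
    "tester", flaky_tester, "demo change", lambda out: out.startswith("tests passed"), queue
)
deployed = run_with_guardrails("deployer", silent_deployer, "demo change", bool, queue)
```

Capping attempts also caps spend per step, and the review queue gives you a visible record of where the team needed a human.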

If You Want to Experiment

If you’re already in the Anthropic ecosystem, keep an eye on the Opus 4.6 documentation and any early‑access programs for multi‑agent features.

For everyone else, the concepts Anthropic is pushing—parallel agents, clear workflows, evaluator‑optimizer patterns—can still be applied using existing open‑source multi‑agent frameworks or your own orchestration layer, even before you get official Agent Teams access.

A simple first step: map one of your existing multi‑step tasks (like “take a ticket from idea to PR”) into roles, then ask yourself, “Which parts could a specialized agent handle, and how would they talk to each other?” That’s essentially the thinking behind Agent Teams.
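If it helps, that mapping exercise can be written down as data before any agent exists. The sketch below is one hypothetical way to do it: the role names, artifact names, and the `handoffs` helper are all invented for illustration. It lists who hands what to whom and flags any input that nobody produces.

```python
from typing import Dict, List, Set, Tuple

# A "ticket to PR" task broken into roles: what each one needs and what it produces.
ROLES: Dict[str, Dict] = {
    "planner": {"needs": ["ticket"], "produces": "spec"},
    "coder": {"needs": ["spec"], "produces": "patch"},
    "tester": {"needs": ["patch"], "produces": "test_report"},
    "reviewer": {"needs": ["patch", "test_report"], "produces": "pull_request"},
}


def handoffs(
    roles: Dict[str, Dict], external_inputs: Set[str]
) -> Tuple[List[Tuple[str, str, str]], List[str]]:
    """Return (producer, consumer, artifact) edges plus any inputs with no source."""
    producer_of = {spec["produces"]: name for name, spec in roles.items()}
    edges: List[Tuple[str, str, str]] = []
    missing: List[str] = []
    for name, spec in roles.items():
        for artifact in spec["needs"]:
            if artifact in producer_of:
                edges.append((producer_of[artifact], name, artifact))
            elif artifact not in external_inputs:
                missing.append(artifact)  # nobody makes this: a gap in the plan
    return edges, missing


edges, missing = handoffs(ROLES, external_inputs={"ticket"})
for source, target, artifact in edges:
    print(f"{source} -> {target}: {artifact}")
```

An empty `missing` list means every role has what it needs. Anything that shows up there is either a human input you forgot to name or a role you still have to define.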